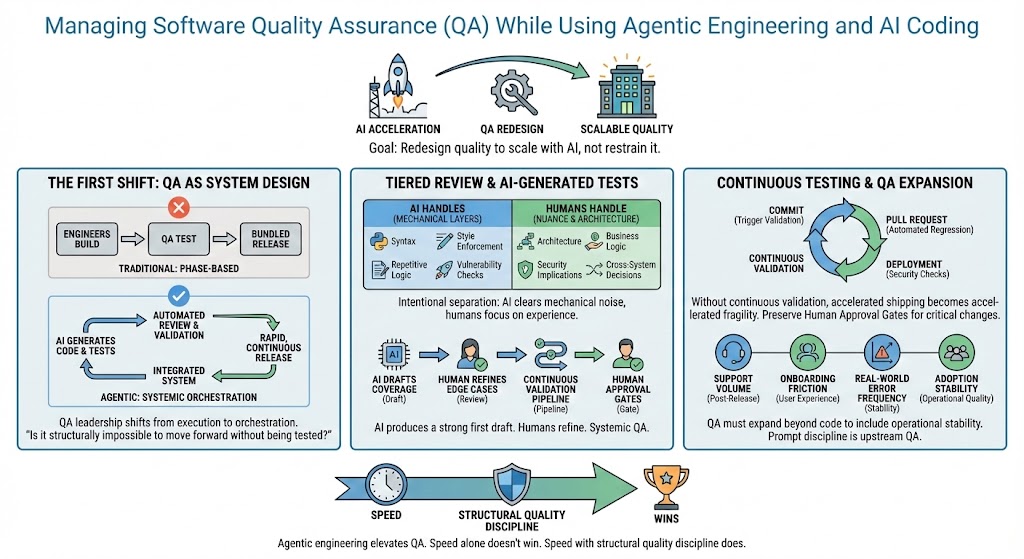

Managing Software Quality Assurance (QA) While Using Agentic Engineering and AI Coding

As AI coding accelerates development cycles, traditional QA models no longer hold up. In this article, Ryan Allis explores how SaaS leaders can redesign quality assurance for the agentic engineering era — shifting from manual testing to AI orchestration, continuous validation, and structured human oversight to maintain reliability without slowing innovation.

If your team is using AI to write code, generate tests, and refactor logic, you’ve probably felt the acceleration already.

Development cycles that used to take weeks now take days. Migrations that were once roadmap projects become short bursts of focused execution. Entire test suites can be scaffolded in minutes.

As one engineering leader described it, a migration that previously required “a month or two of work” was completed in just 24 hours. They called it “the most disruptive thing” they had seen in their careers.

That’s leverage.

But speed changes the risk equation.

If you don’t deliberately redesign how QA works inside an agentic engineering environment, you’ll either slow your team down unnecessarily or create instability that compounds quietly.

The goal is not to restrain AI.

The goal is to redesign quality so it scales with AI.

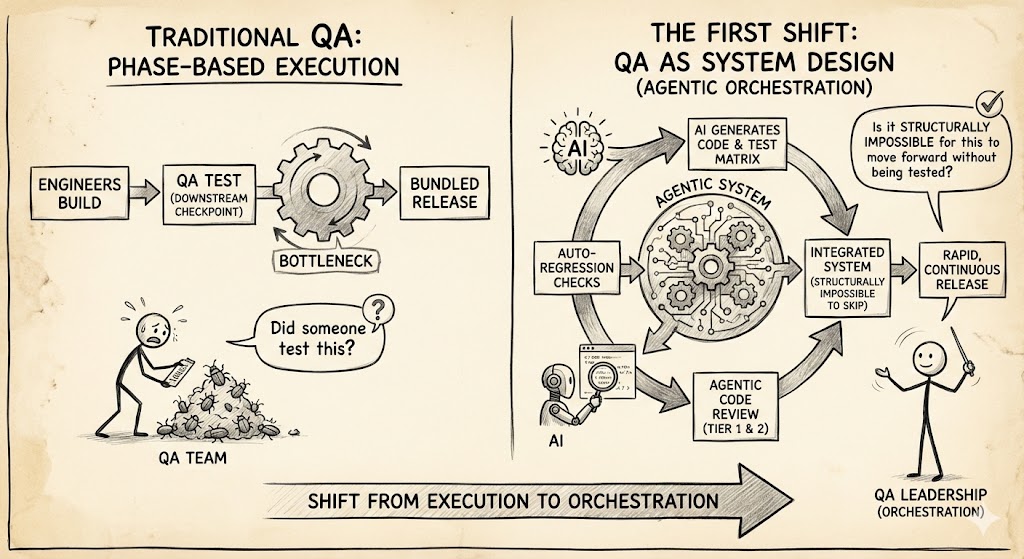

The First Shift: QA Becomes System Design

Traditional QA was phase-based.

Engineers built. QA tested. Releases were bundled and validated at the end. That sequencing assumed slower development cycles.

Agentic engineering collapses those cycles.

Teams are now building internal systems that automatically review code before humans ever see it. As one team described it, “We’ve built an agentic code quality checker. It basically does code review… the agent does a ton of what you would call Tier 1 and Tier 2 code review.”

When AI can:

- Generate functional code

- Produce a draft test matrix

- Run regression checks automatically

- Create a pull request before a human reviews it

QA cannot remain a downstream checkpoint.

It must become an integrated system.

Instead of asking “Did someone test this?” the better question becomes:

“Is it structurally impossible for this to move forward without being tested?”

That’s the redesign.

QA leadership shifts from execution to orchestration.
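One way to picture "structurally impossible" is a gate that fails closed: a change advances only if every required check has run and passed, so a check that never ran blocks the change just like a failing one. The sketch below is a minimal illustration, not a description of any team's pipeline; the check names and the `ChangeSet` type are assumptions made for the example.

```python
from dataclasses import dataclass, field

# Hypothetical check names; a real pipeline would define its own.
REQUIRED_CHECKS = ("unit_tests", "regression", "security_scan")


@dataclass
class ChangeSet:
    """A proposed change plus the results of the checks run against it."""
    change_id: str
    check_results: dict[str, bool] = field(default_factory=dict)


def missing_or_failed(change: ChangeSet) -> list[str]:
    """Return required checks that either did not run or did not pass."""
    return [
        name for name in REQUIRED_CHECKS
        if not change.check_results.get(name, False)
    ]


def can_advance(change: ChangeSet) -> bool:
    """Fail closed: an absent result blocks the change, same as a failure."""
    return not missing_or_failed(change)
```

The design choice that matters is the default in `check_results.get(name, False)`. Treating "no result" as "not passed" is what turns the question from "did someone test this?" into a property of the system.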

Tiered Review: Let AI Handle the Mechanical Layers

AI is extremely strong at structured validation. Humans remain stronger at nuance.

The separation should be intentional.

- AI handles: syntax validation, repetitive logic review, style enforcement, common vulnerability checks.

- Humans handle: architecture, business logic, security implications, and cross-system decisions.

That’s exactly how high-performing teams are structuring it.

The AI clears the mechanical noise. Senior engineers focus on the work that truly requires experience.

Without that separation, teams either over-trust AI or waste its leverage.
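The split above can be made explicit in code rather than left to habit. This is a rough sketch under assumed category labels (the strings are illustrative, drawn from the two lists in this section): mechanical concerns go to the AI tier, and anything not on that list, including categories nobody anticipated, defaults to a human.

```python
from enum import Enum


class Reviewer(Enum):
    AI = "ai"
    HUMAN = "human"


# Labels are assumptions, paraphrasing the "AI handles" list above.
AI_CATEGORIES = {
    "syntax",
    "repetitive_logic",
    "style",
    "common_vulnerability",
}


def route(category: str) -> Reviewer:
    """Mechanical categories go to the AI tier; everything else to a human."""
    if category in AI_CATEGORIES:
        return Reviewer.AI
    return Reviewer.HUMAN


def reviewers_for(categories: list[str]) -> set[Reviewer]:
    """Collect every reviewer tier a change needs, given its categories."""
    return {route(category) for category in categories}
```

Defaulting unknown categories to `Reviewer.HUMAN` guards against the over-trust failure mode: the AI tier only handles what it was explicitly given.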

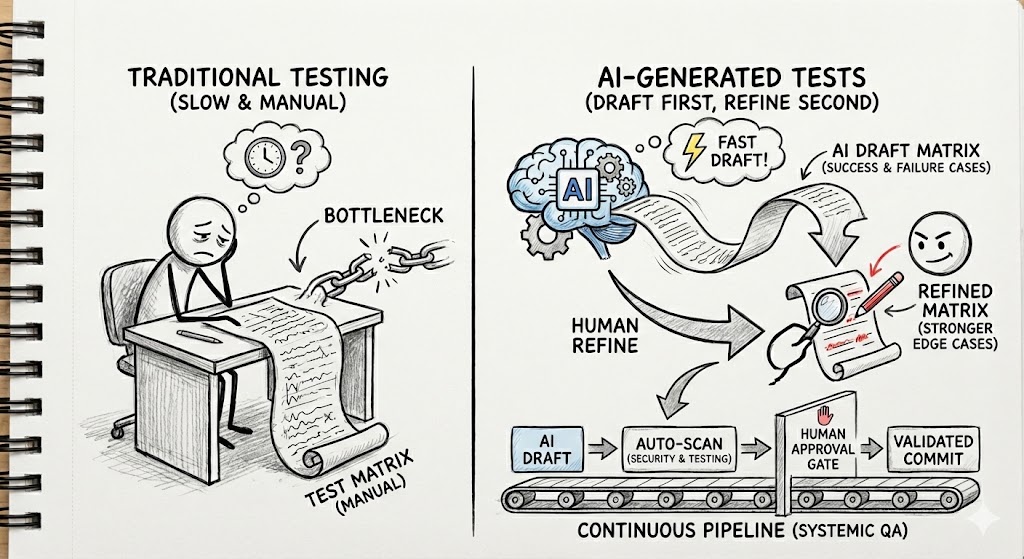

AI-Generated Tests: Draft First, Refine Second

AI is also rewriting how test coverage is built.

Teams are now telling AI systems: here’s the functionality, here are navigation paths, generate success and failure cases. The system builds the matrix automatically. As one engineering leader explained, “We told Cursor… go ahead and build both the successful and the failure cases. It essentially went and built that. About 40% of it was crap, but we were able to make some adjustments.”

That statement captures the right mindset.

AI produces a strong first draft. Humans refine.

The workflow becomes:

- AI drafts the coverage

- QA reviews and strengthens edge cases

- Continuous pipelines validate every commit

- Human approval gates remain in place

In many environments, cloud-hosted agents now automatically run security scans and testing before generating pull requests for approval.

That’s not just speed. That’s systemic QA.
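The "draft first, refine second" workflow can be sketched as a matrix where every drafted case starts unreviewed and only reviewed cases reach the suite. The code below is a simplified illustration: `draft_matrix` is a stand-in for the AI drafting step (it does not call any AI tool), and the `DraftCase` type and case names are assumptions.

```python
from dataclasses import dataclass


@dataclass
class DraftCase:
    """One row of the coverage matrix."""
    name: str
    expect_success: bool
    reviewed: bool = False  # set by a human reviewer, never by the draft step


def draft_matrix(paths: list[str]) -> list[DraftCase]:
    """Stand-in for the AI draft: one success and one failure case per path."""
    matrix = []
    for path in paths:
        matrix.append(DraftCase(f"{path} succeeds", True))
        matrix.append(DraftCase(f"{path} fails cleanly", False))
    return matrix


def accepted(matrix: list[DraftCase]) -> list[DraftCase]:
    """Only human-reviewed cases enter the suite that pipelines run."""
    return [case for case in matrix if case.reviewed]
```

For example, `draft_matrix(["login", "checkout"])` yields four drafted cases, and `accepted(...)` returns none of them until a reviewer marks cases as reviewed. Weak drafts are filtered out before they can give false confidence.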

Continuous Testing Is Now Mandatory

When development cycles compress from weeks to days, periodic QA becomes structurally misaligned.

Every commit must trigger validation.

Every pull request must pass automated regression.

Every deployment must flow through security checks.

Agentic engineering makes this technically feasible. Organizational discipline makes it effective.

Without continuous validation, accelerated shipping becomes accelerated fragility.
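The three rules in this section amount to a small policy table: each kind of event has a check it must trigger. A minimal sketch follows, with hypothetical event and check identifiers; an event with no defined policy is rejected rather than waved through.

```python
# Identifiers are assumptions that mirror the three rules in this section.
REQUIRED_BY_EVENT = {
    "commit": "validation",
    "pull_request": "automated_regression",
    "deployment": "security_checks",
}


def check_for(event: str) -> str:
    """Return the check an event must trigger; unknown events raise."""
    try:
        return REQUIRED_BY_EVENT[event]
    except KeyError:
        raise ValueError(f"no validation policy for event {event!r}") from None
```

Keeping the policy as data makes the organizational discipline visible: adding a new kind of event forces someone to decide what validates it.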

Preserve Human Approval Gates

Automation does not eliminate accountability.

Certain classes of change should always require deliberate human review: authentication, billing logic, database migrations, and critical workflow transitions.

Agentic engineering reduces execution time. It does not remove responsibility.

Clear approval gates prevent instability while preserving speed.
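One common way to enforce a gate like this is to flag changes by the files they touch. The sketch below assumes a repository layout where sensitive code lives under directories named `auth`, `billing`, and `migrations`; those patterns are hypothetical and each team would substitute its own. Critical workflow transitions rarely map to a path, so they are left out here and would need a different signal, such as an explicit label.

```python
from fnmatch import fnmatchcase

# Hypothetical path patterns; map these to your own repository layout.
SENSITIVE_PATTERNS = {
    "authentication": ["auth/*", "*/auth/*"],
    "billing logic": ["billing/*", "*/billing/*"],
    "database migrations": ["migrations/*", "*/migrations/*"],
}


def sensitive_areas(changed_files: list[str]) -> list[str]:
    """Return the sensitive areas a change touches, sorted for stable output."""
    hits = set()
    for path in changed_files:
        for area, patterns in SENSITIVE_PATTERNS.items():
            if any(fnmatchcase(path, pattern) for pattern in patterns):
                hits.add(area)
    return sorted(hits)


def needs_human_approval(changed_files: list[str]) -> bool:
    """True when any changed file falls in a sensitive area."""
    return bool(sensitive_areas(changed_files))
```

A change touching `src/auth/login.py` and `migrations/0002_add.sql` would be flagged for both authentication and database migrations, while a change to `README.md` alone would pass straight through.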

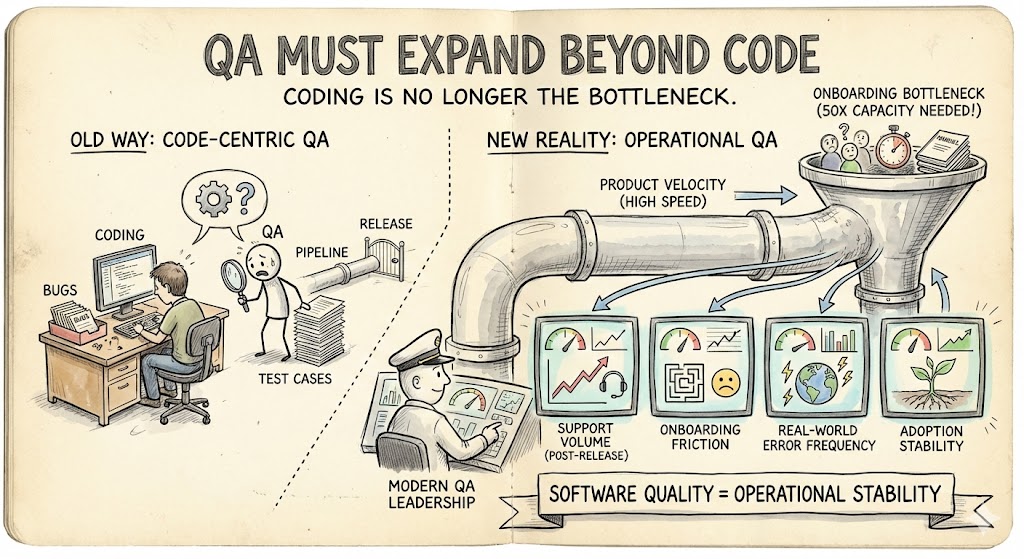

QA Must Expand Beyond Code

Interestingly, some teams are discovering that coding is no longer their bottleneck.

“Our big bottleneck in the company is actually not coding right now, it’s onboarding,” one leader noted, explaining the need to “50X our onboarding capacity” using AI tools.

That insight matters.

As product velocity increases, instability often shifts to onboarding, documentation, and user experience consistency.

Modern QA leadership must monitor:

- Support volume post-release

- Onboarding friction

- Real-world error frequency

- Adoption stability

Software quality now includes operational stability.
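Monitoring these signals can start as a simple before-and-after comparison around each release. The sketch below is illustrative only: the metric names, the 10% tolerance, and the assumption that every metric is "lower is better" are all choices made for the example, not figures from any team quoted here.

```python
def worsened(
    baseline: dict[str, float],
    current: dict[str, float],
    tolerance: float = 0.10,
) -> list[str]:
    """Return metrics that rose more than `tolerance` above their baseline.

    Assumes every metric is lower-is-better (tickets, error rate, drop-off).
    Metrics missing from `current` are skipped rather than guessed at.
    """
    flagged = []
    for name, before in baseline.items():
        after = current.get(name)
        if after is None:
            continue
        if after > before * (1 + tolerance):
            flagged.append(name)
    return flagged
```

With a baseline of 100 support tickets and a post-release count of 130, `worsened` would flag `support_tickets`, while an error rate moving from 0.020 to 0.021 stays inside the tolerance.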

Prompt Discipline Becomes a QA Lever

In an AI-driven system, instructions determine output quality.

One team described building “Claude skills as MD files,” collaboratively maintained by PMs and engineers, where changes are implemented by simply adjusting prompts and rerunning the system.

That is upstream QA.

If prompts are weak, output is unstable. If prompt frameworks are disciplined, quality improves over time.

QA now influences the system before code even exists.
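If prompts live in files, they can be versioned like any other input. One possible sketch, assuming prompt files are loaded into a dictionary of file name to text (the loading step and the file names are hypothetical): compute a short fingerprint over the whole prompt set, then record it next to each generated output so a quality change can be traced back to a prompt change.

```python
import hashlib


def prompt_fingerprint(prompts: dict[str, str]) -> str:
    """Return a short, order-independent hash of a set of prompt files."""
    digest = hashlib.sha256()
    for name in sorted(prompts):
        # Null separators keep file names and contents from running together.
        digest.update(name.encode("utf-8"))
        digest.update(b"\0")
        digest.update(prompts[name].encode("utf-8"))
        digest.update(b"\0")
    return digest.hexdigest()[:12]
```

Two runs with identical prompt files produce the same fingerprint, and any edit to any file produces a different one, which is enough to answer "which prompts generated this?" during a review.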

The Bottom Line

Agentic engineering doesn’t reduce the need for QA.

It elevates it.

As one leader warned, early adopters will “beat our competition” because of the leverage these systems create.

Speed alone doesn’t win.

Speed with structural quality discipline does.