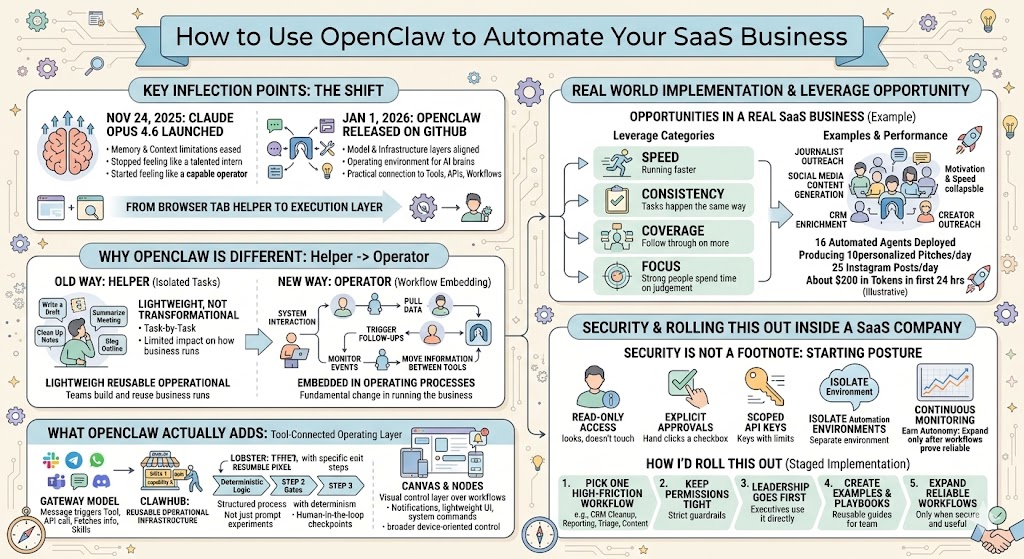

How to Use OpenClaw to Automate Your SaaS Business

A founder-focused look at how OpenClaw, Portal.ai, and Viktor can be used inside a SaaS company to automate real workflows across marketing, operations, and product. This article explores where these tools create real leverage, how to think about security and adoption, and what it actually takes to turn AI from a helpful assistant into part of your company’s operating system.

There were two real inflection points that changed the game here.

- The first was November 24, 2025, when Anthropic launched Claude Opus 4.5 and memory and context window limitations largely went away. That was a much bigger moment than most people realized at the time. Before that, AI could be impressive, but it still had one foot stuck in the “smart assistant” category. It could help with a task, but it often lost the broader context, forgot what mattered, or required too much manual steering to be trusted with anything substantial. Once memory got better and the context limitations loosened up, the model stopped feeling like a talented intern and started feeling more like a capable operator.

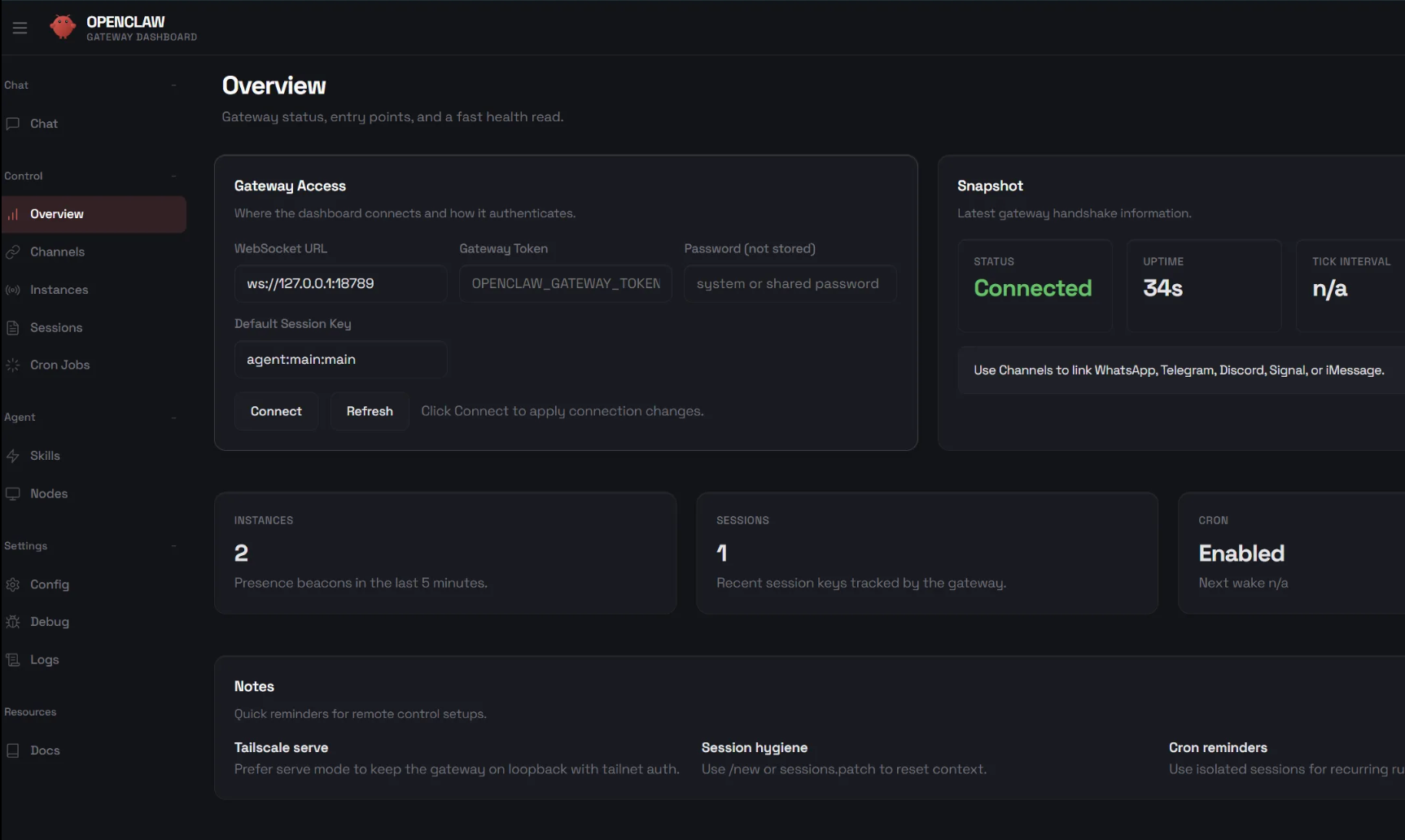

- The second was January 1, 2026, when OpenClaw was released on GitHub. That was the moment the infrastructure layer started catching up to the model layer. Now it wasn’t just that the brains were getting smarter. It was that the operating environment for those brains was getting far more useful. You could connect AI to chat interfaces, tools, APIs, workflows, devices, and approval layers in a way that made it much more practical inside a real business.

Put those two things together and you get a meaningful shift. AI stopped being just a browser tab you open when you need help, and started becoming something much closer to an execution layer inside the company.

That is the real change. And I think most founders are still underestimating just how important it is.

AI Is Moving From Helper to Operator

For the last two years, most of the business use cases people talked about were lightweight. Write a draft. Summarize a meeting. Clean up notes. Generate a blog outline. Those are all useful, and I use AI for those things all the time, but they are not transformational by themselves. They make individual tasks a little faster, but they do not fundamentally change how the business runs.

What starts to matter more is when AI moves from being a helper on isolated tasks to being embedded in actual company workflows. That means it is not just generating text, but interacting with systems, pulling data, triggering follow-ups, monitoring events, moving information between tools, and participating in repeatable operating processes.

That is where OpenClaw gets interesting.

OpenClaw is not just another AI chat wrapper. It is a self-hosted gateway and workflow layer that connects AI agents to the places your team already works. It supports chat channels like Slack, Telegram, WhatsApp, Discord, iMessage, Teams, and others, and then allows those messages to route into agents, skills, tools, and structured workflows behind the scenes.

That may sound technical, but the practical implication is simple. Instead of requiring your team to adopt one more app, one more interface, and one more dashboard, you can bring the AI operator layer into the systems where people already spend their time. That is a big deal, because the best software in the world still fails when it asks a team to change too much behavior too quickly.

OpenClaw is built around the opposite idea. It says: let the assistant meet the team where they already are, and then connect it to the systems that allow it to actually do work.

That is why this category feels different to me from the usual AI hype cycle. This is not mainly about getting better outputs from prompts. It is about shrinking the gap between intention and execution.

The Real Opportunity Is Operational Leverage

Most growth-stage SaaS companies do not have a strategy problem. They have an execution problem.

The leadership team usually knows what needs to happen. They know the campaigns that should be live already. They know the follow-up sequences that should be tighter. They know the reporting should be cleaner, the CRM should be more structured, the customer notes should be more consistent, the content production engine should move faster, and the support feedback loops should get back to product much more quickly. None of this is usually mysterious.

The bottleneck is that there is always more that should get done than the team has clean capacity to execute.

That is why I think the real opportunity with tools like OpenClaw is not novelty. It is leverage.

When AI is connected to APIs, workflows, and systems, it stops acting like a writing assistant and starts acting like an execution layer. It can help bridge the gap between “we should do this” and “this is now happening automatically or semi-automatically inside the business.” That is where the ROI starts getting real.

You can think of the opportunity in a few buckets:

- Speed: getting work done faster

- Consistency: having tasks happen the same way every time

- Coverage: following through on more things that currently fall through the cracks

- Focus: allowing high-judgment humans to spend more time on the work that actually requires judgment

That last point matters a lot. I do not think the future of work is about replacing people. I think it is about giving strong people far more leverage. The best operators inside your company should be spending less time pushing information from one tool to another and more time on decisions, creativity, customer understanding, and strategic pattern recognition.

If AI helps create that shift, it becomes enormously valuable.

What OpenClaw Actually Adds Beyond a Normal LLM

This is where it helps to get concrete.

A lot of people hear about something like OpenClaw and assume it is just a chat interface on top of an LLM.

That undersells what is going on. The more useful frame is that OpenClaw is a tool-connected operating layer. It includes an onboarding flow, browser-based control UI, support for multiple model providers, a skills and plugins ecosystem, and a structured workflow shell that can turn loose agent behavior into more deterministic pipelines.

A few components stand out.

First, there is the gateway model itself. You can message the assistant through a familiar channel like Slack or Telegram, and instead of that message going nowhere beyond conversation, it can trigger a tool, call an API, fetch information, run a skill, or route into a workflow. That alone starts changing the nature of what the assistant can do.
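To make the gateway idea concrete, here is a minimal Python sketch of "a chat message triggers a tool or a workflow instead of just a reply." It is not OpenClaw's routing API or configuration format. `Message`, `ROUTES`, `route`, and both handlers are hypothetical names. A real agent would more likely let the model choose the tool than rely on slash commands.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Message:
    channel: str  # e.g. "slack" or "telegram"
    user: str
    text: str


# Hypothetical handlers standing in for "call a tool" and "start a workflow".
def lookup_account(msg: Message) -> str:
    account = msg.text.partition(" ")[2]  # everything after the command word
    return f"[tool] looking up account for: {account}"


def start_weekly_report(msg: Message) -> str:
    return "[workflow] weekly report queued"


# Hypothetical routing table: the first word of a message selects a handler.
ROUTES: Dict[str, Callable[[Message], str]] = {
    "/account": lookup_account,
    "/report": start_weekly_report,
}


def route(msg: Message) -> str:
    """Send a chat message to a matching handler, or fall back to plain conversation."""
    command = msg.text.partition(" ")[0]
    handler = ROUTES.get(command)
    if handler is None:
        return f"[chat] reply to {msg.user} on {msg.channel}"
    return handler(msg)
```

The channel a message arrives on does not change which handler runs. That channel independence is what lets the team stay in Slack or Telegram while the work happens elsewhere.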

Second, there is ClawHub, which functions as a public registry for skills and plugins. That matters because it pushes the system beyond one-off prompting and toward reusable operational capabilities. Once teams can build and reuse skills, they start creating actual internal infrastructure rather than scattered prompt experiments.

Third, there is Lobster, OpenClaw’s workflow shell. Lobster is designed to create typed, composable, resumable pipelines with approval checkpoints. That may sound like a sentence written for engineers, but the business implication is straightforward. Instead of telling an agent to “go do this” and hoping it behaves sensibly, you can define a more structured process with specific steps, deterministic logic, and human approval gates at the right moments.
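The following plain-Python sketch shows what "resumable pipeline with an approval checkpoint" means in practice. It is not Lobster syntax. `Step`, `PipelineRun`, and `advance` are hypothetical names, and the two steps are a toy version of the journalist-outreach flow described later in this article.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    name: str
    run: Callable[[dict], dict]  # receives current state, returns new keys to merge in
    needs_approval: bool = False  # True = pause here until a human signs off


@dataclass
class PipelineRun:
    steps: List[Step]
    state: dict = field(default_factory=dict)
    next_index: int = 0  # position to resume from; persisting this makes the run resumable
    status: str = "pending"

    def advance(self, approved: bool = False) -> str:
        """Run steps in order, stopping at the first gated step that lacks approval."""
        while self.next_index < len(self.steps):
            step = self.steps[self.next_index]
            if step.needs_approval and not approved:
                self.status = f"awaiting_approval:{step.name}"
                return self.status
            self.state.update(step.run(self.state))
            self.next_index += 1
            approved = False  # one approval unlocks exactly one step
        self.status = "done"
        return self.status


steps = [
    Step("draft_pitch", lambda s: {"draft": f"Pitch for {s['journalist']}"}),
    Step("send_pitch", lambda s: {"sent": True}, needs_approval=True),
]
run = PipelineRun(steps, state={"journalist": "A. Reporter"})
```

Calling `run.advance()` drafts the pitch and then stops with the status `awaiting_approval:send_pitch`. Nothing is sent until someone calls `run.advance(approved=True)`. That pause is the human approval gate the paragraph describes.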

That is the difference between clever automation and reliable automation.

There is also a visual and device-oriented layer through Canvas and nodes, which lets companion devices expose functionality like notifications, lightweight UI surfaces, system commands, and other controls. In practical terms, OpenClaw is becoming not just a text interface, but a broader control layer across workflows and environments.

That is why I would not describe it as just an AI app. I would describe it as the early version of an operating system for AI-enabled execution.

What It Looks Like in a Real Business

The most useful conversations I have seen around OpenClaw are the ones that stay grounded in actual implementation instead of drifting into AI futurism.

One example involved a team using hosted platforms like Portal.ai and Viktor, connected through Telegram and Slack, with 16 automated agents deployed inside the business. Those agents were handling things like journalist outreach, Instagram content generation, CRM enrichment through Apollo, and creator outreach. The system was producing 10 personalized journalist pitches per day and 25 Instagram posts per day, while burning through about $200 in tokens in the first 24 hours.

That example matters because it shows both sides of the story. The upside is real. The system can go from zero to meaningful output incredibly quickly. The discipline required is also real. If you let agents run without guardrails, cost and mess can accumulate just as quickly as productivity.
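Cost is one guardrail that is easy to put in place early. The sketch below shows a hypothetical daily spend cap that refuses agent work once it would push total spend over a limit. The $200-in-24-hours figure comes from the example above. The cap, the blended price per million tokens, and the names `TokenBudget` and `charge` are placeholder assumptions. Real pricing differs by model and by input versus output tokens, and resetting the counter at each day boundary is left out here.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class TokenBudget:
    daily_limit_usd: float
    usd_per_million_tokens: float  # assumed blended price; real pricing varies by model
    spent_usd: Dict[str, float] = field(default_factory=dict)  # spend per agent today

    def total(self) -> float:
        return sum(self.spent_usd.values())

    def charge(self, agent: str, tokens: int) -> bool:
        """Record usage and return True, or return False (recording nothing) if over the cap."""
        cost = tokens / 1_000_000 * self.usd_per_million_tokens
        if self.total() + cost > self.daily_limit_usd:
            return False
        self.spent_usd[agent] = self.spent_usd.get(agent, 0.0) + cost
        return True


budget = TokenBudget(daily_limit_usd=50.0, usd_per_million_tokens=10.0)
```

Tracking spend per agent rather than only in total makes it easier to see which of many agents is burning the budget.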

What jumps out most is the speed. One founder described starting in the evening and having personalized outreach firing off within about thirty minutes. That is a very different world from the old enterprise automation model, where meaningful implementation often took months, multiple vendors, and a painful amount of setup.

We are now in a world where a motivated operator can stand up a meaningful AI execution layer in a day.

That does not mean it will be mature in a day. It does not mean it will be safe in a day. It does not mean it will be optimized in a day. But it does mean the cost of experimentation has collapsed, and that changes who gets the advantage. The teams that win are no longer just the biggest or best-resourced ones. They are the ones that can pair speed with judgment.

Where Portal.ai and Viktor Fit

It is also helpful to understand where some of the hosted options fit, because not every founder wants to self-host infrastructure right away.

The simplest way I think about the landscape is this.

OpenClaw is more self-hosted, control-oriented, and system-level. It appeals to teams that want flexibility, visibility, and ownership over the operating layer.

Portal.ai leans more into persistent memory, orchestration, and the idea of a personal AI environment that gets smarter and more personalized over time. It feels more like an orchestration-and-memory-first product.

Viktor positions itself more directly as an execution-focused AI coworker connected to tools and workflows. It is easier to explain to operators who care less about architecture and more about whether the system can actually move work forward.

The exact product you choose matters less than the broader point: founders are no longer simply picking a model. They are choosing an operating environment for how AI will live inside the company. That is a more important decision than many people realize, because the interface and workflow layer often determine whether the technology actually gets adopted.

Security Has to Be Built In Early

The moment AI starts touching email, CRM records, project systems, or internal tools, security stops being a footnote.

This is one reason OpenClaw is more interesting than many lightweight AI tools. Its documentation is explicit about the trust model. It is not pretending that giving a tool-enabled agent broad access automatically creates safe per-user authorization. It talks directly about channel access controls, session isolation, tool policies, transcript logging, execution sandboxing, and broader threat modeling.

That clarity matters because a lot of products in this category still speak in vague abstractions when they should be talking concretely about trust boundaries.

Still, the most important security posture is not what the product says. It is how you roll it out.

If I were deploying OpenClaw inside a SaaS company, I would want the initial posture to look something like this:

- start with read-only access where possible

- require explicit approvals before any send, post, update, or deploy action

- use separate accounts and scoped API keys

- isolate sensitive automation environments when appropriate

- monitor token usage, logs, and unusual behavior continuously
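The posture above can be expressed as a small policy check that runs before every tool call. This is a minimal sketch, not OpenClaw's tool-policy configuration. `ToolGrant`, `decide`, `WRITE_ACTIONS`, and `AUDIT_LOG` are hypothetical names. Each grant stands in for a scoped API key, anything outside that scope is denied, and in-scope write actions still wait for a human.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple

# Actions the list above says should wait for explicit human approval.
WRITE_ACTIONS: FrozenSet[str] = frozenset({"send", "post", "update", "deploy"})

# Every decision is recorded so logs can be reviewed for unusual behavior.
AUDIT_LOG: List[Tuple[str, str, str]] = []


@dataclass(frozen=True)
class ToolGrant:
    tool: str               # e.g. "crm" or "email"
    scopes: FrozenSet[str]  # actions this scoped API key permits, e.g. {"read"}


def decide(grant: ToolGrant, tool: str, action: str) -> str:
    """Return 'allow', 'needs_approval', or 'deny' for one requested tool action."""
    if tool != grant.tool or action not in grant.scopes:
        decision = "deny"            # outside the key's scope: refuse outright
    elif action in WRITE_ACTIONS:
        decision = "needs_approval"  # in scope, but a human has to confirm it
    else:
        decision = "allow"           # e.g. read-only lookups
    AUDIT_LOG.append((tool, action, decision))
    return decision
```

Starting with read-only grants means most requests resolve to `allow` or `deny`. Widening a grant's scopes is then a deliberate, reviewable change rather than something an agent can drift into.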

I have heard operators say things like, “I’m not comfortable giving AI full access to email yet,” and I think that is a healthy instinct. Another described running their AI stack on an isolated computer specifically to reduce security risk. That may or may not be necessary for every team, but the mindset is right.

The better way to do this is to earn your way into autonomy. Start narrow. Keep permissions tight. Add approval gates. Expand only when the workflow proves reliable and the trust boundary is well understood.

That is usually a faster path in the long run because it prevents expensive cleanup later.

The Biggest Bottleneck Is Still Adoption

A lot of leadership teams assume that once the tools exist, adoption will happen naturally. It usually does not.

The biggest bottleneck is not model quality. It is not APIs. It is not plugins. It is not whether the agent can theoretically do the work.

The biggest bottleneck is behavior change.

Inside most companies, a few people go deep on AI very quickly. They build real workflows, use it every day, and start thinking differently about work. Everyone else dabbles. They test a few prompts, maybe have one good experience, then drift back to old habits. Leadership then wonders why the company is not moving faster.

The answer is almost always that employees have not yet seen enough internal proof.

Real adoption does not happen because somebody sends an all-hands memo saying everyone should use AI more. It happens because people see teammates saving time, removing pain, shipping faster, and creating wins that are easy to copy. That is why leadership involvement matters so much. If executives are not using these tools directly, the rest of the company can feel that immediately. The whole initiative starts to feel like another software rollout instead of a real operating shift.

If you want adoption, a few things tend to work well:

- leadership using it firsthand

- internal examples shared in Slack

- short Loom walkthroughs of useful workflows

- monthly show-and-tells

- budget that allows experimentation without anxiety over token costs

- simple repeatable playbooks for common use cases

The budget point matters more than it sounds. If every experiment feels like it requires permission, experimentation dies. Companies learn much faster when they remove both the technical friction and the psychological friction.

The Productivity Gains Can Be Very Real

One reason this category is moving so fast is that when it works, the gains are not subtle.

I have seen teams describe their output expectations doubling across the board. Things that used to take a week now take two days. Things that used to take a month now take a week. Product requests that previously took two or three months to ship can now be prototyped in a day and tested inside a week. Entire websites have been rebuilt in days. Vendor spend has been cut dramatically as companies bring more execution in-house with AI-assisted workflows and no-code tooling.

The important thing here is not any one anecdote. It is the pattern.

The value is not in a single magical task. It is in the cumulative effect of dozens of tasks getting faster, cleaner, and more consistent. Reporting gets easier. Outreach gets more systematic. Documentation improves. Content production accelerates. Internal knowledge becomes more accessible. Support loops get tighter. Product iteration speeds up.

That cumulative effect can be enormous.

It also changes the pace of learning. A company that can test faster can improve faster. A company that can move customer feedback into the product quickly does not just save time. It compounds its rate of learning relative to competitors.

That is why I think founders should stop framing this only as productivity software. It is also learning-rate software.

Don’t Marry the Interface

One of the healthiest perspectives to have right now is that this market is moving very fast and probably will keep moving very fast for the next few years.

What feels essential today may get absorbed by a model provider tomorrow. What feels like a breakthrough workflow layer today may look basic a year from now. The wrong move is to become emotionally attached to a specific interface or brand too early.

The smarter posture is to build around outcomes.

Use the best system for the job. Standardize where consistency matters. Stay flexible where the market is changing quickly. Recognize that different models may still be better at different tasks. One may be better for strategic thinking, another for structured workflow execution, another for coding, another for content.

That is one thing OpenClaw gets right. It supports multiple model providers rather than assuming the world will stay static. Strategically, that matters a lot. You do not want your entire AI operating layer to become brittle because the model market shifts again in six months.
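One way to keep the operating layer from becoming brittle is to route by task type through an ordered list of preferred models, with fallbacks. The sketch below is a hypothetical illustration. The model identifiers are placeholders rather than real model names, and `PREFERENCES` and `pick_model` are not part of any OpenClaw API.

```python
from typing import Dict, List, Set

# Placeholder model identifiers; swap in whatever your providers actually offer.
PREFERENCES: Dict[str, List[str]] = {
    "strategy": ["provider-a/reasoning", "provider-b/general"],
    "workflow": ["provider-b/structured", "provider-a/general"],
    "coding": ["provider-c/code", "provider-a/general"],
    "content": ["provider-a/writing", "provider-b/general"],
}
DEFAULT_PREFERENCE: List[str] = ["provider-b/general", "provider-a/general"]


def pick_model(task_type: str, available: Set[str]) -> str:
    """Return the first preferred model for this task that is currently available."""
    for model in PREFERENCES.get(task_type, DEFAULT_PREFERENCE):
        if model in available:
            return model
    raise LookupError(f"no available model for task type {task_type!r}")
```

When the model market shifts, the change becomes an edit to one mapping rather than a rewrite of every workflow that calls a model.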

How I’d Roll This Out Inside a SaaS Company

If I were implementing OpenClaw inside a growth-stage SaaS company today, I would not start with a big “AI transformation” initiative. Those usually create more theater than progress.

I would start with one painful, repetitive workflow that already costs the team too much time and deserves a better system. Maybe it is CRM cleanup. Maybe it is reporting. Maybe it is meeting prep, support triage, content production, or internal documentation. The exact workflow matters less than picking something narrow enough to control and important enough to matter.

Then I would run a staged rollout:

- First, pick one high-friction workflow with clear business value.

- Second, keep permissions tight and use approval gates where needed.

- Third, have leadership use it directly before asking the team to adopt it.

- Fourth, create examples and lightweight playbooks the rest of the company can copy.

- Fifth, expand only after the workflow is reliable, secure, and worth scaling.

That is not the flashiest approach, but it is usually the one that works. It creates momentum without creating unnecessary chaos.

Final Thought

The companies that get the most out of OpenClaw and tools like it are not necessarily the ones with the fanciest tech stack. They are the ones with the clearest operating discipline.

They move early, but not recklessly. They experiment aggressively, but inside guardrails. They let leadership go first. They give teams examples to copy. They monitor cost. They protect access. They scale what proves useful.

That is the pattern.

OpenClaw matters because it points toward a future where AI is not just answering questions but actually moving work forward inside the company. It is more chat-native, more tool-connected, more structured, and more serious about workflows than the average AI wrapper. That makes it a useful signal of where this whole market is going.

And maybe the most important point is this: the goal is not to remove humans from the business. The goal is to increase the leverage of capable people so they can spend more of their time on judgment, creativity, and strategic work.

If founders hold onto that framing, they will make much better decisions about how to use this next wave of tools.