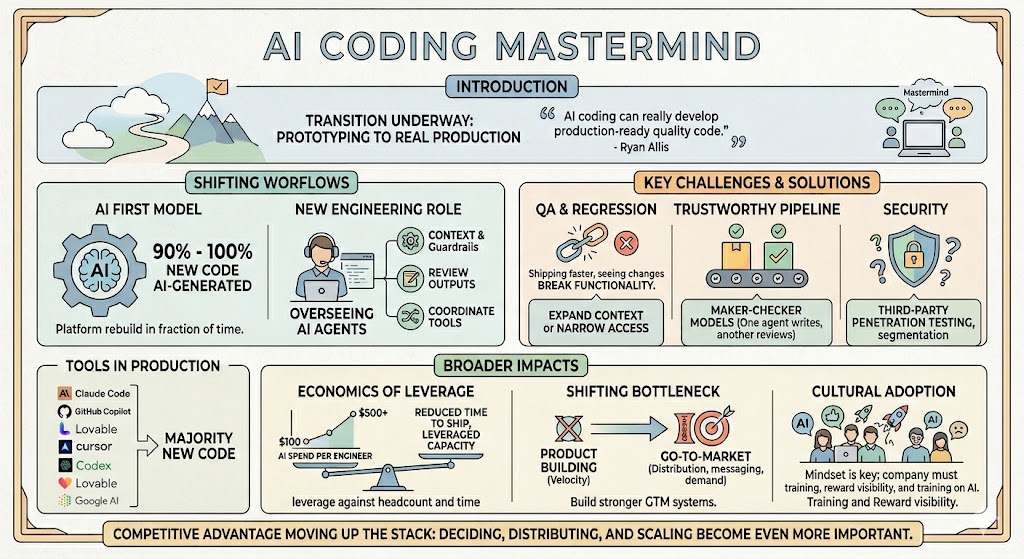

SaasRise Special CEO Mastermind on Agentic Engineering & AI Coding

A founder-level recap of the SaasRise mastermind on AI coding and agentic engineering, covering what teams are actually doing with AI today, where QA and security are still breaking, and why faster product development is shifting the real bottleneck to go-to-market.

Something shifted over the last few months, and you could feel it in this mastermind.

This was not one of those polite conversations where founders are still debating whether AI coding might eventually matter. This was a room full of SaaS operators comparing notes on a transition that is already underway. AI coding has moved from experimentation into real production workflows, and agentic engineering is starting to change how software teams actually operate.

Ryan Allis opened the session by framing that shift clearly. “AI coding can really develop production-ready quality code,” he said, describing just how different the current moment feels compared with the prototyping-heavy stage from earlier cycles of AI tooling. He also emphasized that this was meant to be collaborative, not a passive event: “This is designed as a pure mastermind. It’s not a webinar.”

That tone mattered, because the real value of the conversation came from hearing what founders and teams are already doing in the field: how much code AI is writing, where QA is still breaking, what security teams are worried about, what the spend looks like per engineer, and how engineering roles are being redefined in real time. The Zoom chat made it clear this was a broad and serious group, with SaaS leaders joining from across the U.S., Canada, and Europe.

The strongest takeaway from the session was simple: for many companies, the inflection point is no longer ahead of them. They believe they are already past it.

Ryan said, “We’ve hit an inflection point in the last six months,” and that line was reinforced repeatedly throughout the call. In the chat, he also answered a question about whether February felt like the moment things truly changed by saying, “Yes, the launch of Claude Opus 4.6 was the breakthrough.” Patrick van Staveren added that the frontier labs “all turned [the] corner around the end of 2025.”

That explains why the mood of the session was not theoretical. It was operational.

Several operators described teams where 90% to 100% of new code is now AI-generated. One founder shared that a platform rebuild that would normally require a much larger team and a much longer timeline is now being completed by a much smaller group in a fraction of the time. Another team reported that more than 95% of all new code is already being written with AI tools. In the chat, one attendee summarized the new posture bluntly: “Shifted the whole business to AI first model.”

That phrase captures the heart of what this session was really about. The leaders furthest along are not treating AI as a helpful assistant. They are redesigning the development workflow around it.

What advanced teams are doing now

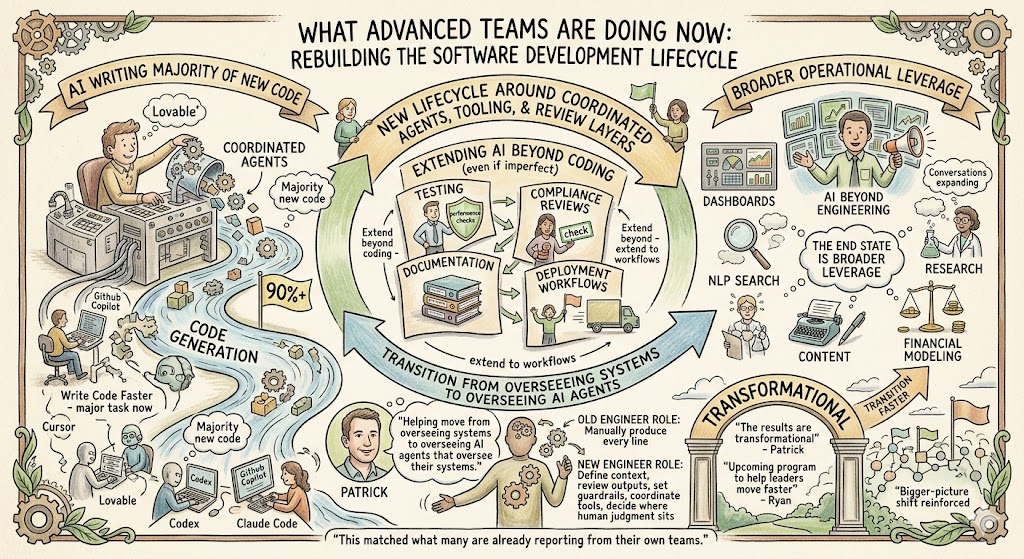

The most mature teams on the call were not just using AI to write code faster. They were beginning to rebuild the software development lifecycle around coordinated agents, tooling, and review layers.

A few patterns came up again and again:

- AI is now writing the majority of new code for many teams, often through tools like Claude Code, Codex, Cursor, GitHub Copilot, Lovable, and Google AI.

- Several companies are extending AI into testing, performance checks, compliance reviews, documentation, and deployment workflows, even if those systems are still imperfect.

- Multiple teams are already using AI beyond engineering, including for dashboards, NLP search, research, content, and financial modeling.

That last point matters because it shows how quickly this conversation is expanding beyond code generation. AI coding may be the doorway, but the end state is broader operational leverage.

Patrick captured that transition with one of the most useful lines of the session. He said he is now helping people move “from overseeing systems to overseeing AI agents that oversee their systems.”

That is a much bigger change than simply typing prompts into an IDE. It suggests that the role of an engineer is becoming less about manually producing every line and more about defining context, reviewing outputs, setting guardrails, coordinating tools, and deciding where human judgment still has to sit at the center.

Ryan reinforced that bigger-picture shift when he described the upcoming program as a way to help leaders move through this transition faster. “The results are transformational,” Patrick said later in the session, and that line did not feel exaggerated in context. It matched what many in the room were already reporting from their own teams.

The real bottleneck is moving

One of the sharpest observations in the chat was that the constraint for some companies is no longer product building. It is go-to-market.

That idea is important because it changes how founders should think about leverage. For years, the dominant assumption in SaaS was that product velocity was the main constraint. Build faster, ship faster, hire more engineers, and eventually growth follows. What this mastermind suggested is that for a growing number of teams, product velocity is no longer the scarcest resource.

That does not mean product stops mattering. It means the bottleneck may be shifting toward distribution, messaging, awareness, and demand capture.

That broader point lines up closely with Ryan’s larger body of work. Across his growth frameworks, he repeatedly argues that B2B SaaS growth becomes more predictable when companies stop relying on a single tactic and instead build a coordinated system across outbound, content, matched audiences, retargeting, and demand capture. In his writing, he describes the strongest modern growth motion as one built on market coverage, AI-powered outbound, content, and layered digital advertising working together.

That becomes even more relevant if AI sharply compresses product iteration cycles. If features can be shipped faster, then winning may depend less on whether you can build and more on whether your market sees you, trusts you, and understands why your product matters.

Ryan has been making that exact point in other contexts as well. In his growth writing, he notes that many founders underinvest in repeatable acquisition and that a durable business is not built by founder heroics alone.

This mastermind made that same lesson visible from a different angle: faster product creation raises the premium on strong go-to-market systems.

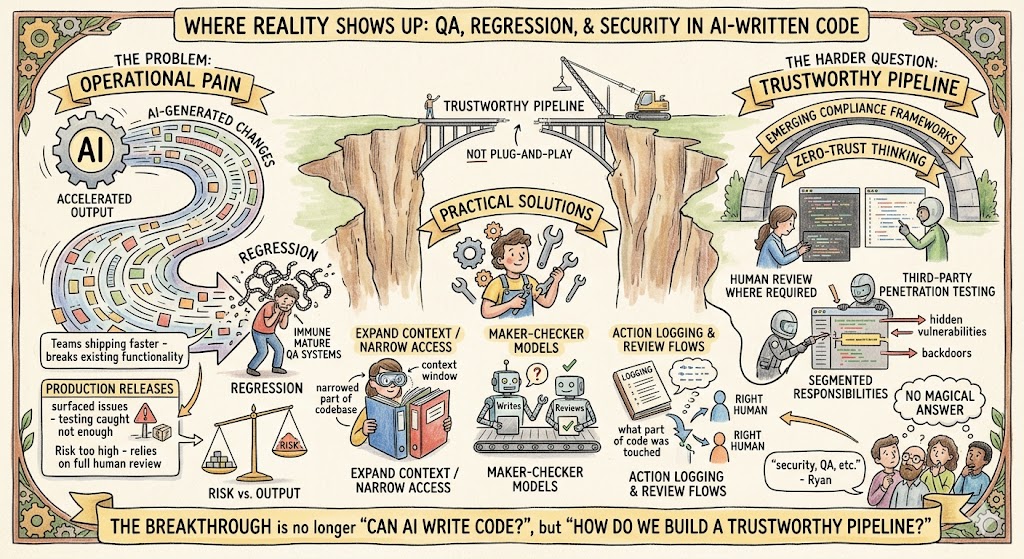

QA, regression, and security are where reality shows up

The session was not naïve. If the first half of the conversation was energized by speed, the second half was grounded by real operational pain.

The biggest issue raised repeatedly was regression. Teams are shipping faster, but they are also seeing AI-generated changes break existing functionality, especially inside larger or more interconnected codebases. One operator described production releases that repeatedly surfaced issues because the testing process had not caught enough before deployment. Another said their company still relies on full human review in certain parts of the codebase because the risk is too high otherwise.

That is the current tradeoff. Output has accelerated faster than quality assurance systems have matured.

Several practical themes emerged from that part of the discussion:

- Teams are expanding context windows or narrowing the part of the codebase each AI workflow is allowed to touch.

- Some are experimenting with maker-checker models, where one model or agent writes and another reviews.

- Others are logging every agent action and building review flows that route changes to the right human based on what part of the code was touched.
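
To make the last two patterns concrete, here is a minimal sketch of a maker-checker loop that logs every agent action and then routes an approved change to a human reviewer based on the files it touched. Nothing here comes from a specific team on the call: the routing rules, reviewer group names, and the `maker` and `checker` callables are all hypothetical placeholders for whatever agents and ownership map a team actually uses.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Callable

# Hypothetical routing rules: glob pattern -> human reviewer group.
# The first matching rule wins, so list the most sensitive areas first.
ROUTING_RULES = [
    ("billing/*", "payments-leads"),
    ("auth/*", "security-team"),
    ("*.sql", "data-platform"),
]
DEFAULT_REVIEWER = "on-call-engineer"


@dataclass
class Patch:
    files: list[str]  # paths the agent changed
    diff: str         # the proposed change itself


def reviewers_for(files: list[str]) -> list[str]:
    """Return the human reviewer groups responsible for the touched files."""
    groups = set()
    for path in files:
        for pattern, group in ROUTING_RULES:
            if fnmatchcase(path, pattern):
                groups.add(group)
                break
        else:  # no rule matched this path
            groups.add(DEFAULT_REVIEWER)
    return sorted(groups)


def maker_checker(
    task: str,
    maker: Callable[[str, str], Patch],
    checker: Callable[[Patch], tuple[bool, str]],
    log: list[dict],
    max_rounds: int = 3,
) -> dict:
    """One agent writes, another reviews; every action is appended to `log`."""
    feedback = ""
    for round_no in range(1, max_rounds + 1):
        patch = maker(task, feedback)
        log.append({"round": round_no, "actor": "maker", "files": patch.files})

        approved, feedback = checker(patch)
        log.append({"round": round_no, "actor": "checker",
                    "approved": approved, "feedback": feedback})

        if approved:
            # Checker approval is not a merge: a human still signs off.
            return {"status": "needs_human_review",
                    "reviewers": reviewers_for(patch.files)}

    # The checker never approved: escalate instead of merging.
    return {"status": "escalated", "reviewers": [DEFAULT_REVIEWER]}
```

In this sketch the checker's approval only moves a change into human review, which reflects the caution several operators described. A team comfortable with more automation could skip the human step for low-risk paths, but that is a policy choice rather than something the code decides.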

That part of the conversation was especially useful because it showed where the frontier really is. The breakthrough is no longer just “Can AI write code?” The harder question is now “How do we build a trustworthy pipeline around AI-written code?”

Ryan made that concern explicit when he invited the group to spend time on the problems people were running into with “security, QA, etc.”

The security discussion was even more revealing because it showed how unsettled the standards still are. Some teams are operating in environments where every line still needs human review for compliance reasons. Others are trying to navigate customer concerns around whether AI-generated code can introduce hidden vulnerabilities or backdoors. There was no magical answer in the room, and that honesty made the discussion better.

Instead of pretending the problem is solved, the group surfaced the current best responses: human review where required, third-party penetration testing, zero-trust thinking, segmented responsibilities, and emerging compliance frameworks.

That kind of realism is helpful. It keeps the conversation grounded. The opportunity is enormous, but it is not plug-and-play.

The economics are getting clearer

Another practical part of the call centered on AI spend per engineer.

One question in the chat asked whether teams were still finding that spend came in around $100 per engineer per month, or whether real usage was trending more toward $400 to $500 per person once pipelines and heavy usage were included. The responses suggested the higher number is increasingly common. Some people cited figures in the $200 to $300 range, while others were already seeing around $280, $400, or $500 monthly. Ryan replied with a memorable line: “Around $1000 per month isn’t crazy.”

That matters because it reframes how founders should think about this budget line.

If one engineer becomes two or three times more productive, then token spend stops looking like software overhead and starts looking more like an investment in leveraged capacity. Patrick reinforced that logic in discussion, arguing that the most advanced users treat these costs not as mere expenses but as leverage against headcount and time.

That does not mean teams should spend recklessly. It means they need to evaluate AI budgets against delivered output, reduced time to ship, improved coverage, and the opportunity cost of not adopting fast enough.
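
One rough way to run that evaluation is a back-of-the-envelope calculation like the sketch below. The spend figures echo the ranges mentioned in the chat, but the loaded engineer cost is an assumption you would replace with your own number, and the model deliberately ignores quality, rework, and review overhead.

```python
def breakeven_gain(monthly_ai_spend: float, loaded_monthly_cost: float) -> float:
    """Fractional productivity gain at which AI spend pays for itself.

    `loaded_monthly_cost` is the fully loaded monthly cost of one engineer
    (an assumption supplied by the caller, not a figure from the session).
    """
    return monthly_ai_spend / loaded_monthly_cost


def net_monthly_value(
    monthly_ai_spend: float,
    loaded_monthly_cost: float,
    productivity_multiplier: float,
) -> float:
    """Value of the extra capacity gained, minus what the tools cost.

    A multiplier of 2.0 means the engineer delivers twice the output,
    which this simple model values at one extra engineer's loaded cost.
    """
    extra_capacity = (productivity_multiplier - 1.0) * loaded_monthly_cost
    return extra_capacity - monthly_ai_spend


if __name__ == "__main__":
    LOADED_COST = 10_000.0  # hypothetical loaded cost per engineer per month
    for spend in (100.0, 500.0, 1_000.0):
        gain = breakeven_gain(spend, LOADED_COST)
        print(f"${spend:,.0f}/month breaks even at a {gain:.1%} productivity gain")
```

Under that hypothetical $10,000 loaded cost, even $1,000 per month pays for itself at a 10% productivity gain, which is why the higher spend figures did not alarm most people on the call. The conclusion is only as good as the productivity estimate fed into it.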

Ryan’s broader operating philosophy has a similar pattern. In other contexts, he argues that founders often underinvest in growth once they have product-market fit because they do not understand their economics well enough to scale with confidence.

A similar mistake may now be happening in engineering. Some teams may underinvest in AI infrastructure because they are looking at monthly tool cost in isolation rather than in relation to engineering velocity and market timing.

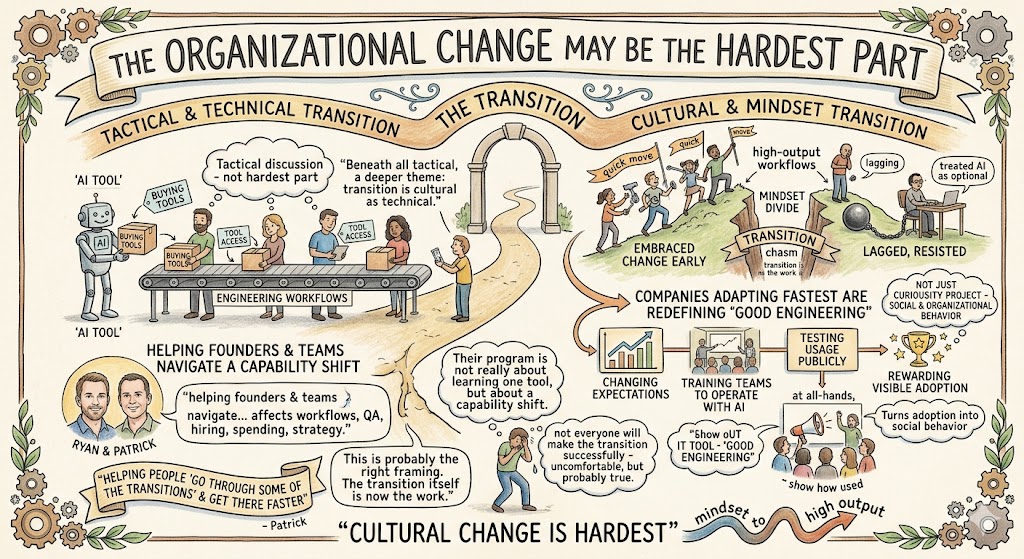

The organizational change may be the hardest part

Beneath all the tactical discussion, there was a deeper theme running through the mastermind: this transition is as much cultural as technical.

Several comments made clear that the biggest divide inside teams is not tool access. It is mindset. Some engineers embraced the change early and quickly moved into high-output workflows. Others lagged, resisted, or treated AI as something optional. A few leaders on the call were candid that not everyone will make the transition successfully.

That is uncomfortable, but probably true.

The companies adapting fastest are not merely buying tools. They are changing expectations. They are redefining what good engineering looks like. They are training teams to operate with AI, having people demonstrate their usage publicly, and rewarding visible adoption.

One of the strongest operational examples came from a company that required everyone to show how they were using AI at the next all-hands. That is smart because it turns AI adoption into a social and organizational behavior, not just a personal curiosity project.

This is also where Ryan and Patrick’s teaching role becomes clearer. The program they previewed is not really about learning one tool. It is about helping founders and teams navigate a capability shift that affects engineering workflows, QA, hiring, spending, and ultimately strategy.

Patrick described the mission simply: helping people “go through some of the transitions” and get there faster.

That is probably the right framing. The transition itself is now the work.

A few lessons that stood out most

If I had to condense the mastermind into a handful of practical lessons, these would be the ones that stayed with me:

- AI coding is no longer mainly for prototypes. Many teams are now using it for real production development.

- The breakthrough is creating new problems in QA, regression control, and security review faster than old workflows can absorb them.

- The most advanced teams are not using one agent for everything. They are separating jobs across creation, review, testing, and compliance.

- Spend per engineer is rising, but many leaders increasingly view that spend as justified leverage rather than pure cost.

- As product gets easier to build, go-to-market execution may become the real limiting factor for many SaaS businesses.

That final point may be the most strategic of all. It connects this session back to Ryan’s larger worldview about how durable SaaS companies scale. If building gets faster, then audience ownership, content, outbound, ads, and systemized market presence only become more valuable.

Final thoughts

What made this mastermind valuable was not hype. It was specificity.

Ryan set the stage by saying, “This is our special community mastermind on AI coding and agentic engineering,” and the session lived up to that description. It was a practical, founder-level conversation about what is changing, what is already working, and where the risks still are.

The strongest conclusion from the call is not that every problem has been solved. It is that the operating model is changing anyway.

AI coding is increasingly capable of writing production-grade software. Agentic workflows are starting to reshape how teams think about development, testing, and review. Engineering output is accelerating. QA systems are scrambling to catch up. Security practices are still evolving. Budgets are being rewritten. Team expectations are shifting.

And perhaps most importantly, competitive advantage may be moving up the stack. As building becomes easier, deciding, distributing, and scaling become even more important.

Ryan closed the call by looking ahead to the formal program and the next mastermind, but the subtext of the whole session was already clear: this is not a side trend. It is becoming part of the new operating baseline for modern SaaS teams.