AI Coding & Agentic Engineering Mastermind Recap

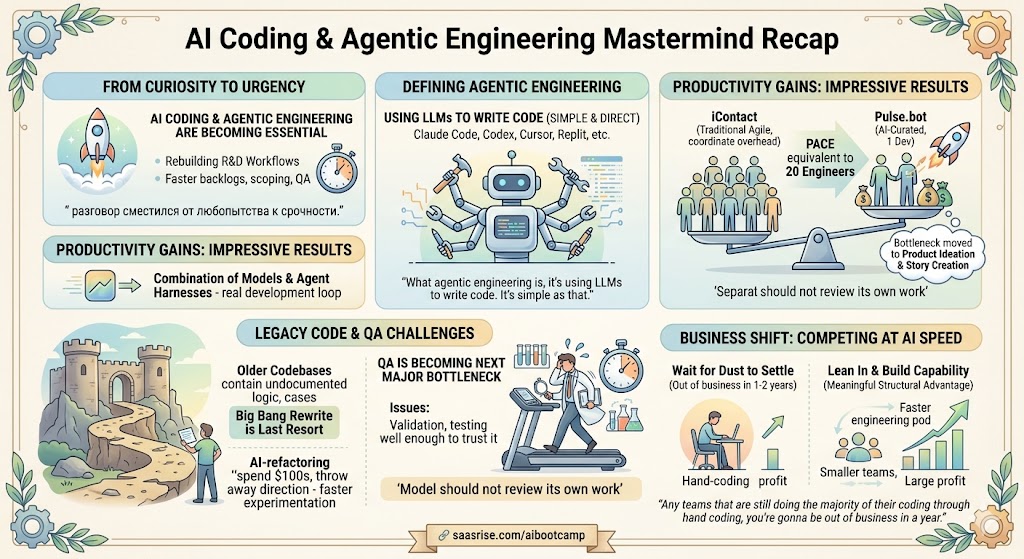

AI coding has moved from novelty to necessity. In this mastermind, what became clear is that the best SaaS teams are no longer using tools like Claude Code, Codex, and Cursor just to prototype ideas faster. They are starting to rebuild their actual R&D workflows around them. The opportunity now is not simply to write code faster. It is to ship faster, clear backlogs faster, and give smaller teams the output of much larger ones. The leaders who learn how to combine AI coding with strong product judgment, QA, and security discipline are going to have a major advantage over the next 12 to 24 months.

There are moments in technology when the conversation shifts from curiosity to urgency. This mastermind felt like one of those moments.

What struck me most in this session was not that AI coding is improving. We all know that already. It was that a growing number of founders, product leaders, and engineers are no longer treating it as a side experiment. They are rebuilding their R&D workflows around it: changing how features get scoped, how code gets written, how QA gets handled, and even how fast a backlog can disappear.

That is a big shift. And it is happening faster than most SaaS leaders realize.

If you want to go deeper on this with us, join the AI Coding Bootcamp at www.saasrise.com/aibootcamp.

We created it for SaaS teams that want to move beyond dabbling and actually learn how to build with AI coding and agentic engineering in a structured way. In the mastermind, I shared that we already had 25 companies enrolled in the bootcamp, which tells you how quickly this topic is moving from interesting to essential.

In the session, I explained it simply: “What agentic engineering is, it’s using LLMs to write code. It’s simple as that.” That definition matters because people often overcomplicate the term. We are not mainly talking here about customer-facing AI agents or generic workflow automation. We are talking about using tools like Claude Code, Codex, Cursor, Replit, and similar systems to materially change how software gets built.

Patrick Van Staveren, who co-led the mastermind with me, put his finger on what has changed over the last year. “The big shift is the combination of the models and then also the agent harnesses that come around them.” In other words, it is not just that the models got better. It is that they can now write code, test code, iterate, and work through mistakes in ways that are starting to resemble a real development loop rather than a one-off prompt.

That distinction is the whole story.

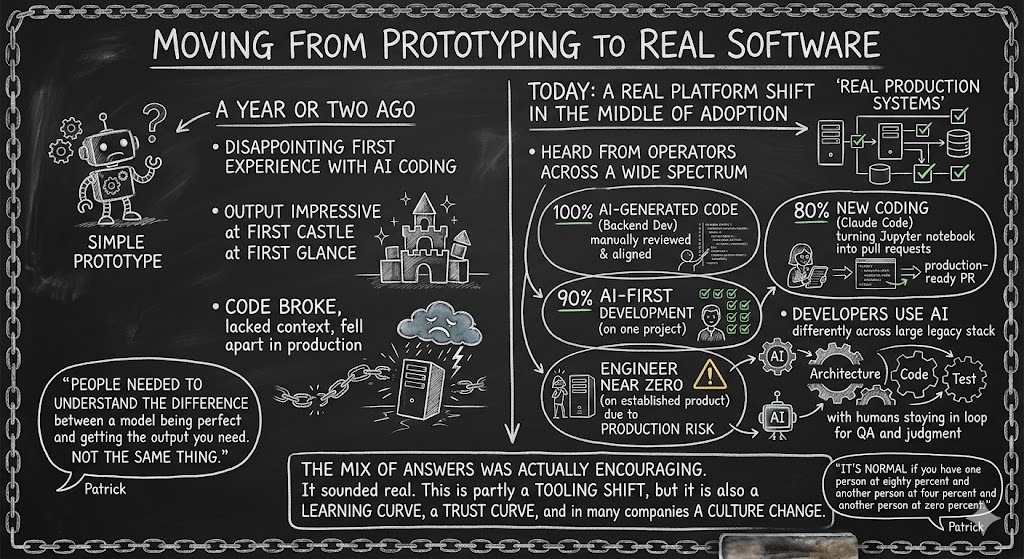

We are moving from prototyping to real software

One of the themes I wanted to unpack in the mastermind was the progression from simple prototypes to real production systems. A year or two ago, many teams had a disappointing first experience with AI coding. The output looked impressive at first glance, but the code often broke, lacked context, or fell apart in production. Patrick described that early stage well when he said people needed to understand the difference between a model being perfect and a model producing the output they need. Those are not the same thing.

Today, we are in a different place.

During the session, we heard from operators across a pretty wide spectrum:

- One leader described a backend developer working at essentially 100% AI-generated code, while still reviewing and aligning that code manually.

- Another said their team was around 90% AI-first development on one project, while a separate engineer on a more established product was still near zero because of production risk.

- A data scientist shared that Claude Code was handling roughly 80% of new coding, including turning Jupyter notebook work into production-ready pull requests.

- Another team described developers using AI differently across a large legacy stack, with agents helping architect, code, and test, while humans still stayed in the loop for QA and judgment.

That mix of answers was actually encouraging. It sounded real. Some teams are far ahead. Some are cautious. Some are experimenting in one corner of the stack before rolling it out further. That is exactly what a real platform shift looks like in the middle of adoption.

Patrick made another point I thought was important: “It’s normal if you have one person at eighty percent and another person at four percent and another person at zero percent.” A lot of leaders underestimate the human side of this change. This is partly a tooling shift, but it is also a learning curve, a trust curve, and in many companies a culture change.

The productivity gains are getting hard to ignore

I shared my own experience on the call because I think founders need real reference points, not abstractions.

At iContact, we had around 60 engineers at scale and used a very traditional agile and scrum development model. It worked for its time, but it was slower, heavier, and far more dependent on coordination overhead than what is now possible.

In contrast, I have been building Pulse.bot, an AI-curated news aggregation product, with one developer. As I said during the mastermind: “I’m now developing at a pace in terms of quality code push to production per week that would be equivalent to about twenty engineers previously at iContact.” That is not a throwaway line. That is my lived experience of the shift.

Now, is that true for every team, every product, every engineer, and every legacy stack? Of course not. But it is directionally true enough that SaaS leaders should pay very close attention.

I also shared that with our current workflow, my real bottleneck is no longer engineering throughput. It is product ideation and story creation. Our developer is often finishing user stories faster than I can define the next ones. That is a wild change if you have spent years operating in environments where product and engineering backlogs always felt heavy and slow.

One participant reinforced that point with a story from an insurance company. He said the team had effectively cleared its backlog and now spent its mornings reviewing pull requests generated overnight. Whether or not every detail of that model proves durable, the signal is clear: the constraint is moving.

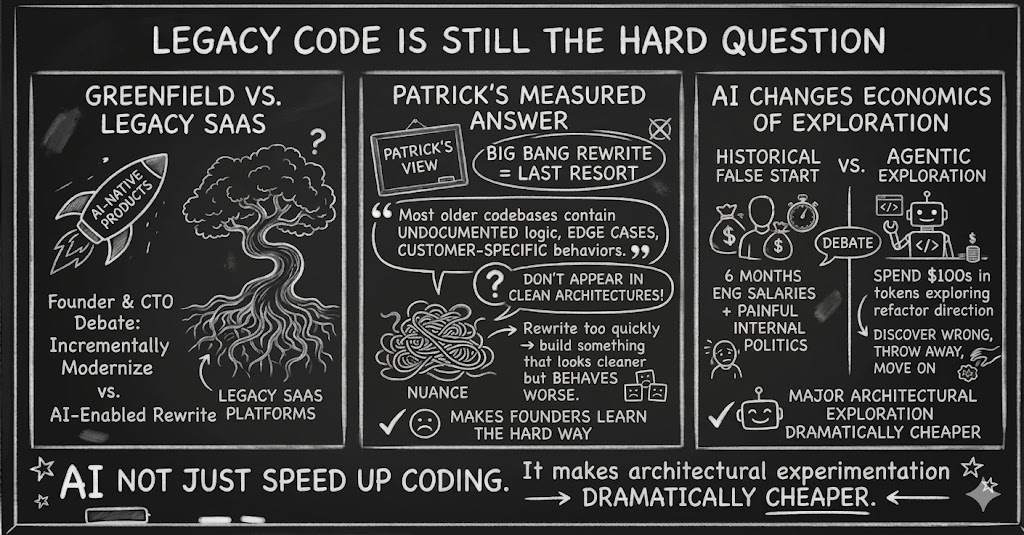

Legacy code is still the hard question

Greenfield AI-native products are one thing. Legacy SaaS platforms are another.

That is why one of the most useful parts of the mastermind was the discussion around older codebases. Founders and CTOs are rightly asking whether they should incrementally modernize or pursue some kind of AI-enabled rewrite.

Patrick gave a measured answer, and I think it is the right one. “The last resort is the Big Bang rewrite.” He pointed out that most older codebases contain a huge amount of undocumented business logic, edge cases, and customer-specific behaviors that do not show up in clean architecture diagrams. If you rewrite too quickly without reverse engineering all of that nuance, you risk building something that looks cleaner but behaves worse.

That matches what many SaaS founders have learned the hard way.

At the same time, AI changes the economics of exploration. Patrick made the point that with agentic coding, you can spend the equivalent of a couple hundred dollars in tokens exploring a major refactor direction, discover it is wrong, throw it away, and move on. Historically, that kind of false start might have cost a team six months of engineering salaries and a painful internal political debate.

That is one of the most underappreciated benefits of this shift. AI does not just speed up coding. It makes architectural experimentation dramatically cheaper.

QA is becoming the next major bottleneck

As teams get faster at generating code, the pressure moves downstream.

That came through clearly in the mastermind. Several participants said some version of the same thing: the issue is no longer only writing code quickly. The issue is validating it well enough to trust it. That includes unit testing, integration testing, UI testing, system testing, and user-level experience testing.

One participant described using Manus to act more like a human QA layer, clicking through an application, testing workflows, checking UX consistency, and even reporting page load problems. Another talked about using MCP and Playwright-based flows to generate acceptance tests. These were not hypothetical examples. These were teams actively trying to figure out how to keep QA from becoming the new choke point.

Patrick summarized the principle well when he noted that the model should not review its own work in the same context window. That is a subtle but important point. You need separation in context, and in some cases even model separation, to get useful review behavior.
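To make Patrick's point about context separation concrete, here is a minimal Python sketch under stated assumptions. It is not a tool anyone on the call described. `ModelFn` is a hypothetical stand-in for whatever LLM client a team actually uses, and `generate_then_review`, `ReviewResult`, and the prompts are illustrative names. The detail that matters is that the reviewer's message list is built from scratch, so the reviewer sees only the task and the proposed code, never the author's conversation.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical interface: a "model" is any function that takes chat messages and returns text.
# In practice this would wrap a real LLM client; that wrapper is an assumption here.
Message = Dict[str, str]
ModelFn = Callable[[List[Message]], str]


@dataclass
class ReviewResult:
    code: str
    review: str
    author_context: List[Message]
    reviewer_context: List[Message]


def generate_then_review(task: str, author: ModelFn, reviewer: ModelFn) -> ReviewResult:
    """Generate code in one context, then review it in a fresh, separate context."""
    # Author context: the task plus the conversation that produced the code.
    author_context: List[Message] = [
        {"role": "system", "content": "You write code for the task you are given."},
        {"role": "user", "content": task},
    ]
    code = author(author_context)
    author_context.append({"role": "assistant", "content": code})

    # Reviewer context: built from scratch, not copied from author_context.
    # It sees only the task and the finished code, so it does not inherit
    # the author's reasoning or assumptions.
    reviewer_context: List[Message] = [
        {"role": "system", "content": "You review code you did not write. List concrete defects."},
        {"role": "user", "content": f"Task:\n{task}\n\nProposed code:\n{code}"},
    ]
    review = reviewer(reviewer_context)
    return ReviewResult(code, review, author_context, reviewer_context)


if __name__ == "__main__":
    # Stub models so the sketch runs without any API access.
    def stub_author(messages: List[Message]) -> str:
        return "def add(a, b):\n    return a - b"

    def stub_reviewer(messages: List[Message]) -> str:
        under_review = messages[-1]["content"]
        return "Defect: subtracts instead of adding." if "a - b" in under_review else "No defects found."

    result = generate_then_review("Write add(a, b) that returns a + b.", stub_author, stub_reviewer)
    print(result.review)
```

Passing a different model as `reviewer` than as `author` adds the model separation Patrick mentioned; passing the same model still keeps the two contexts apart.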

This is where I think the next year of tooling innovation will be particularly intense. The teams that figure out how to combine AI coding speed with trustworthy QA and security processes are going to create a major operating advantage.

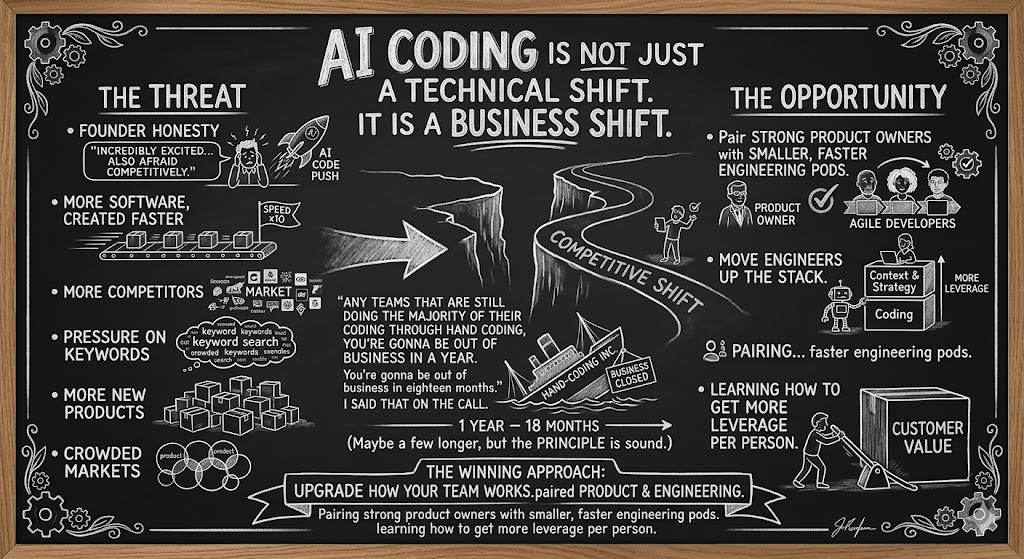

This is not just a technical shift. It is a business shift.

One of the most honest comments in the mastermind came from a founder who said he was incredibly excited by AI coding but also afraid of what it means competitively. More software being created faster means more competitors, more pressure on keywords, more new products, and more crowded markets. That is a real concern.

But I still come back to the same conclusion.

“Any teams that are still doing the majority of their coding through hand coding, you’re gonna be out of business in a year. You’re gonna be out of business in eighteen months.” I said that on the call because I believe the competitive dynamics are now moving that quickly. Maybe for a few companies that timeline will be longer, but the principle is sound. If your competitors are shipping five to ten times faster with similar quality, they will eventually outrun you on features, experiments, customer requests, and market responsiveness.

That does not mean replacing engineers with reckless automation. It means upgrading how your team works. It means moving engineers up the stack. It means pairing strong product owners with smaller, faster engineering pods. It means learning how to get more leverage per person.

That is the opportunity here.

A few takeaways I’d emphasize coming out of the session

If I had to reduce the mastermind to a few practical conclusions, they would be these:

- AI coding is no longer just for prototypes. Many teams are now using it in real production environments.

- The biggest gains are not only in writing code faster, but in compressing the full path from idea to shipped feature.

- Legacy modernization is possible, but it requires discipline and thoughtful reverse engineering.

- QA, testing, and security are becoming the next major bottlenecks.

- Teams that learn this early will have a meaningful structural advantage over teams that wait.

Patrick closed with a line I thought was worth remembering: “Use it. Learn it. These tools are gonna change.” That is exactly right. The interfaces will change. The models will improve. The tooling stack will keep shifting. But the answer is not to wait for the dust to settle. The answer is to build capability while the field is still forming.

And I will add one of my own beliefs here as well: the tools we have today are likely the worst we will ever use from this point forward. They will get better. They will get cheaper. They will get more integrated into how software teams operate. That is why this is the right moment to lean in.

The companies that treat agentic engineering as a side experiment may learn something. The companies that build a real operating system around it are going to move much faster.

If you want help making that transition, join us in the AI Coding Bootcamp at www.saasrise.com/aibootcamp. That is exactly why we built it.